Use Windows MPIO for DataCore backend storage connections

When you install DataCore SANsymphony-V (SSV), the setup asks you to allow the installation of some special drivers. SSV needs these drivers to act as a storage target for hosts and other storage servers. Usually you have three different port roles in a DataCore SSV setup:

- Frontend Ports (FE)

- Mirror Ports (MR)

- Backend Ports (BE)

Frontend (FE) ports act in target-only mode. These ports are disabled if you stop a DataCore storage server. Mirror (MR) ports can act as target AND initiator. You can set a mirror port to a specific mode (target or initiator) if you like, but I wouldn’t recommend it. Theoretically you can set one MR port to act as initiator and a second one to target-only mode. If a port is set to target-only, it is also stopped when the DataCore storage server is stopped. A backend (BE) port acts as initiator for backend storage. Usually the FE ports act as target-only, the MR ports as target/initiator and the BE ports as initiator-only. If you use local (or SAS-connected) storage, there will be no BE ports.

When it comes to ALUA-capable backend storage, DataCore SSV is a bit old-school. But stop! What is ALUA? Asymmetric Logical Unit Access (ALUA), sometimes also known as Target Port Groups Support (TPGS), is a set of commands that lets you define prioritized paths for SCSI devices. With ALUA, a dual-controller storage system can “tell” the server which of the controllers is the “owning” controller for a specific volume. All paths are active, but only a subset of the paths to a volume is active and optimized. This is often referred to as “Active/Optimized” and “Active/Non-Optimized”.

Using a non-optimized path is not a problem when it comes to write IO (because write IO has to travel across the mirror link between the controllers to the other controller’s cache anyway), but when it comes to read IO, using a non-optimized path is a mess. And at this point, DataCore SSV is a bit old-school. Even if the backend storage is ALUA-capable (all new storage systems should be ALUA-capable), DataCore SSV doesn’t care about optimized and non-optimized paths. It simply chooses one path and uses it. And this can cause performance problems. There are two solutions for this problem:

- You check the chosen backend paths and manually select one (!) active and optimized path.

- You replace the DataCore drivers for the backend ports and use Microsoft MPIO to handle the backend paths.

Solution 1 is okay if you only have a few backend disks. As for solution 2: DataCore itself uses the Microsoft Windows MPIO framework, so it’s available by default once you have installed DataCore SANsymphony-V. DataCore allows the usage of 3rd party MPIO software (like EMC PowerPath), but they will never support it. If you have trouble with your backend connection, you will be on your own.
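To make the difference between the two approaches more tangible, here is a small Python sketch of the path selection problem. It is a toy model only: the `Path` class, the path names and both selection functions are made up for illustration and say nothing about how DataCore SSV or the Microsoft DSM are actually implemented. It simply contrasts "take the first path" with "prefer the Active/Optimized paths".

```python
from dataclasses import dataclass
from enum import Enum


class AluaState(Enum):
    ACTIVE_OPTIMIZED = "Active/Optimized"
    ACTIVE_NON_OPTIMIZED = "Active/Non-Optimized"


@dataclass(frozen=True)
class Path:
    name: str          # hypothetical label: initiator port -> controller port
    controller: str    # controller on which this path terminates
    state: AluaState   # ALUA state reported for this path


def pick_first_path(paths):
    """ALUA-unaware choice: use whatever path happens to come first."""
    return paths[0]


def pick_optimized_paths(paths):
    """ALUA-aware choice: prefer optimized paths, fall back to all paths."""
    optimized = [p for p in paths if p.state is AluaState.ACTIVE_OPTIMIZED]
    return optimized or list(paths)


# Example volume owned by controller B: only the paths to B are optimized.
paths = [
    Path("hba0->A0", "A", AluaState.ACTIVE_NON_OPTIMIZED),
    Path("hba1->A1", "A", AluaState.ACTIVE_NON_OPTIMIZED),
    Path("hba0->B0", "B", AluaState.ACTIVE_OPTIMIZED),
    Path("hba1->B1", "B", AluaState.ACTIVE_OPTIMIZED),
]

naive = pick_first_path(paths)
aware = pick_optimized_paths(paths)

print("ALUA-unaware:", naive.name, "-", naive.state.value)
print("ALUA-aware:  ", [p.name for p in aware])
```

In this example the ALUA-unaware choice ends up on a path to the non-owning controller, while the ALUA-aware choice only uses the two paths to the owning controller. If no optimized path is left (for example after a controller failure), the sketch falls back to the remaining paths.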

Using a Third Party Failover product

Using a Third Party Failover product (such as EMC’s PowerPath or the many MPIO variants from Storage Vendor) can be used directly on the DataCore Server. In the case where Storage Arrays are attached by Fibre Channel connections, do not use the DataCore Fibre Channel back-end driver when using any Third Party Failover product - use the Third Party’s preferred Fibre Channel Driver instead.

This statement was taken from FAQ 1302 (Storage Hardware Guideline for use with DataCore Servers). Some things won’t work once the backend ports use the native (non-DataCore) drivers. You won’t be able to see the backend paths or monitor the performance using the DataCore SSV GUI. Backend storage that is handled by native drivers and 3rd party MPIO products will be treated as “local” storage. DataCore also describes this in FAQ 1302:

A Storage Array that is connected to a DataCore Server but without using the DataCore Fibre Channel back-end driver can still be used. This includes SAS or SATA-attached, all types of SSD, iSCSI connections and any Fibre Channel connection using the Vendor’s own driver.

Any storage that is connected in this way will appear to the SANsymphony-V software as if it were an ‘Internal’ or ‘direct-attached’ Storage Array and some SANsymphony-V functionality will be unavailable to the user such not being able to make use of SANsymphony-V’s performance tools to get some of the available performance counters related to Storage attached to DataCore Servers. Also some potentially useful logging information regarding connections between the DataCore Server and the Storage Array is lost (i.e. not being able to monitor any SCSI connection alerts or errors directly) and may hinder some kinds of troubleshooting, should any be needed.

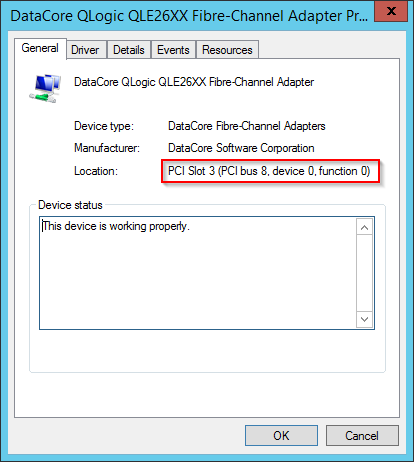

You should replace the backend drivers AFTER the installation of SSV, but BEFORE you map storage to the newly installed storage server. To identify the correct FC-HBA or NIC, take a look at the PCI bus number. You can find this information on the info tab of the server port details in the DataCore SSV GUI. Then check the FC-HBA/ NIC in the Windows device manager for the same PCI bus number.

Patrick Terlisten/ vcloudnine.de/ Creative Commons CC0

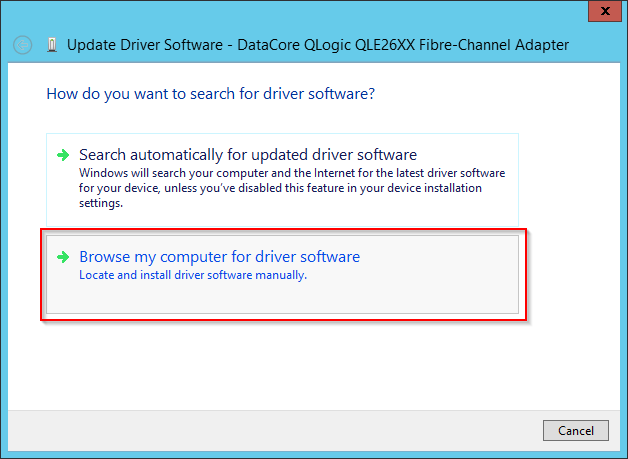

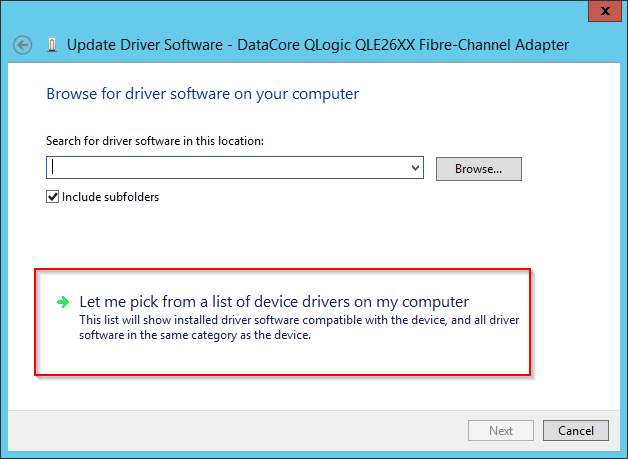

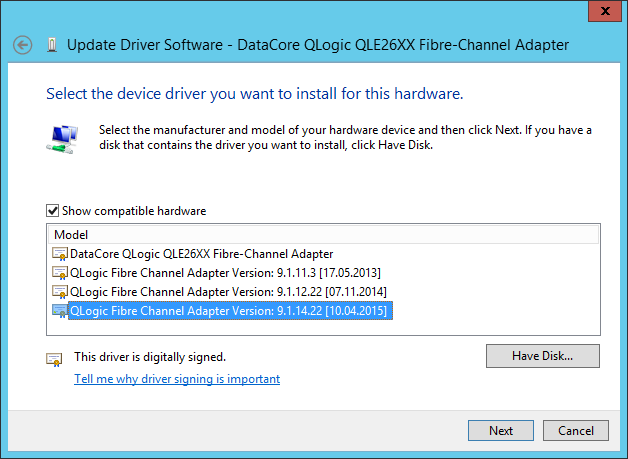

Switch to the “Driver” tab and replace the DataCore driver with the native HBA driver.


After replacing the drivers you will notice that the backend ports are marked as missing in the DataCore SSV GUI. Simply remove them. You don’t need them anymore. Now you can map storage to the storage server. Make sure that you scan for new devices in the MPIO console or that you run

mpclaim -r -i -a ""

to claim new devices. You can check the path status using the device manager (check the properties of the disk device, then switch to the MPIO tab) or you can use this command

mpclaim.exe -s -d

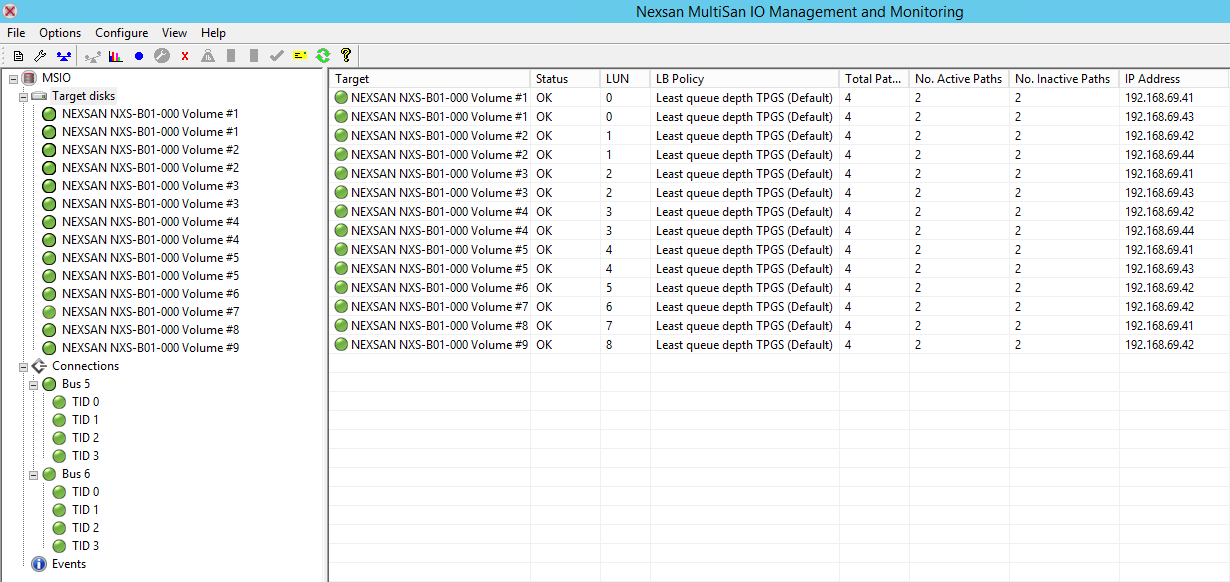
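If you want to check the MPIO disks on several storage servers, it can help to turn the summary output into something a script can work with. The following Python sketch parses a sample of the `mpclaim.exe -s -d` summary table. Please note the assumptions: the sample text and its column layout (MPIO disk, system disk, load balance policy, DSM name) are what I would expect to see, but the exact output can differ between Windows versions, and the function name `parse_mpclaim_summary` is my own invention.

```python
import re

# Assumed sample of the `mpclaim.exe -s -d` summary; real output may differ.
SAMPLE_OUTPUT = """\
MPIO Disk    System Disk  LB Policy    DSM Name
-------------------------------------------------------------------------------
MPIO Disk1   Disk 2       RR           Microsoft DSM
MPIO Disk0   Disk 1       FOO          Microsoft DSM
"""

# A data row starts with "MPIO Disk<n>"; the header has a space after "Disk".
ROW = re.compile(r"^MPIO Disk(\d+)\s+Disk (\d+)\s+(\S+)\s+(.*\S)\s*$")


def parse_mpclaim_summary(text):
    """Return one dict per MPIO disk found in the summary text."""
    disks = []
    for line in text.splitlines():
        match = ROW.match(line)
        if match:
            mpio, system, policy, dsm = match.groups()
            disks.append({
                "mpio_disk": int(mpio),
                "system_disk": int(system),
                "lb_policy": policy,
                "dsm": dsm,
            })
    return disks


disks = parse_mpclaim_summary(SAMPLE_OUTPUT)
for disk in disks:
    print(disk)
```

On a real storage server you would feed the function with the captured command output instead of the sample text, for example via `subprocess.run(["mpclaim.exe", "-s", "-d"], capture_output=True, text=True).stdout`.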

Sometimes the storage vendor offers a monitoring tool (this one is from Nexsan) that provides information about path state, MPIO policy and statistics.


I can’t see many reasons to use the DataCore drivers and the native backend path handling of SSV. But you should be clear about the limitations when it comes to support!