Deploying HP StoreVirtual VSA – Part II

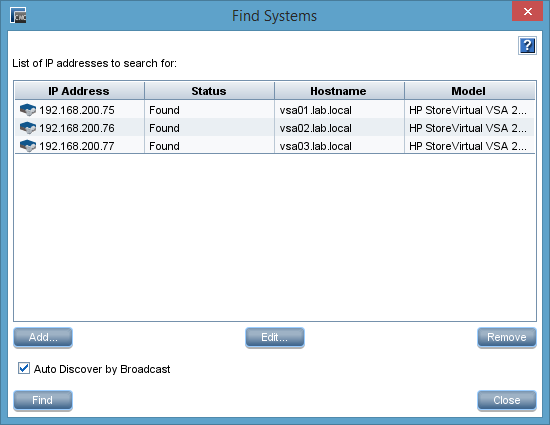

Part I of this series covered the deployment; Part II is dedicated to the configuration of the StoreVirtual VSA cluster. I assume that the Centralized Management Console (CMC) is already installed. Start the CMC. If you see no systems under “Available Systems”, click “Find” in the menu and then choose “Find Systems…”. A dialog will appear. Click “Add…” and enter the IP address of one of the previously deployed VSA nodes. Repeat this until all deployed VSA nodes are added, then click “Close”. All available VSA nodes should now be listed under “Available Systems”.

Patrick Terlisten/ vcloudnine.de/ Creative Commons CC0

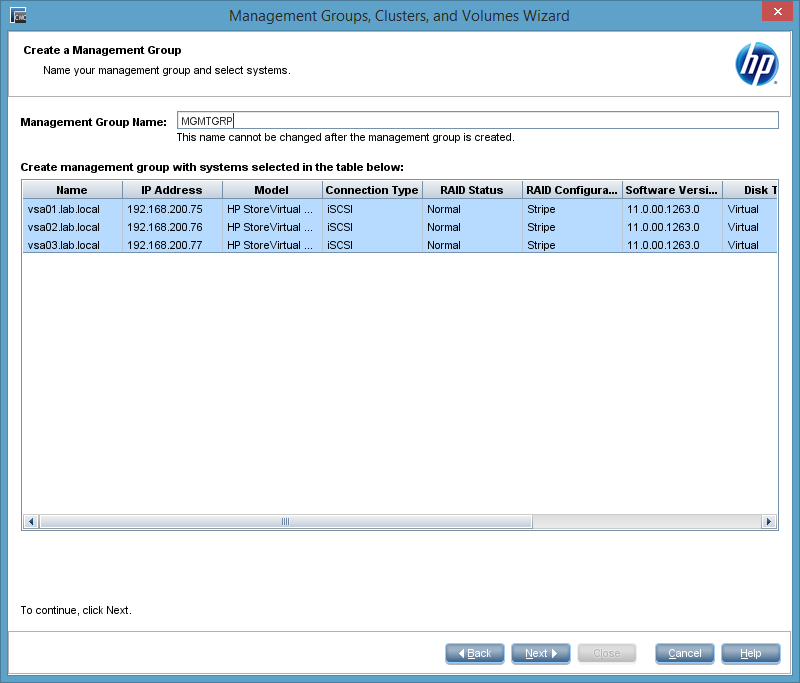

A management group contains virtual and physical StoreVirtual systems that are managed together. Clusters and volumes are defined per management group, as are user accounts. Right-click a node and choose “Add to New Management Group…” from the context menu. We will add all three nodes to this new management group.

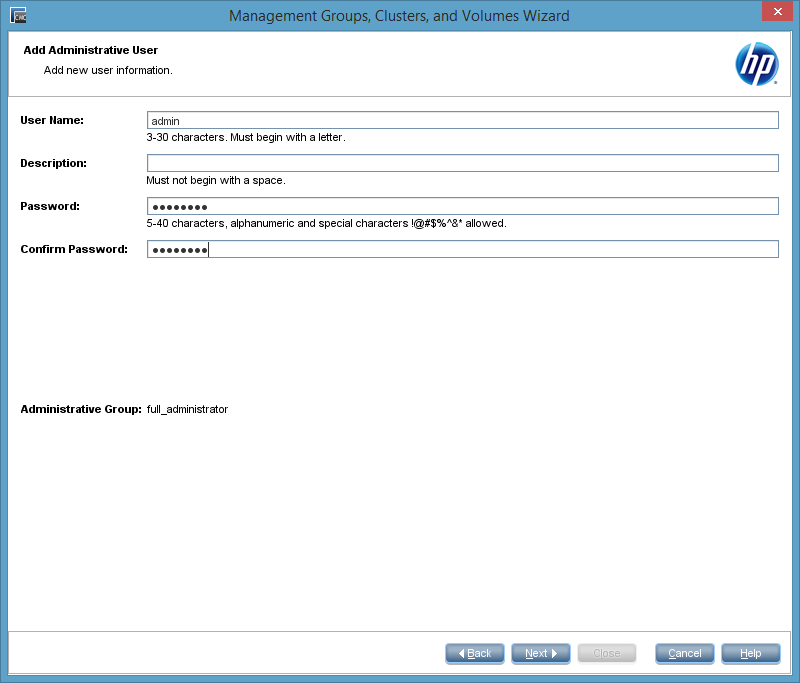

Click “Next”. On the next page of the wizard, we have to enter a username and password for an administrative user that will be added to all nodes.

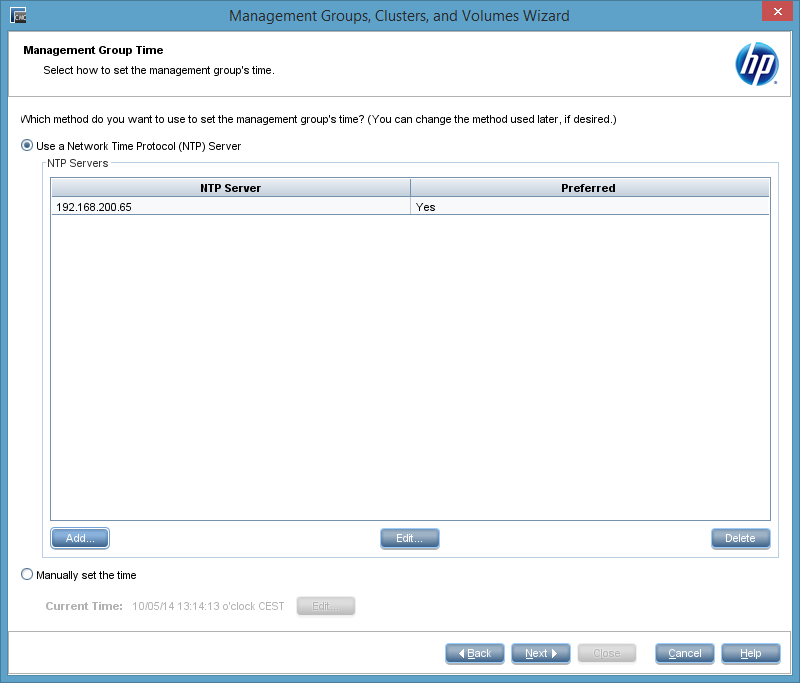

On the next page, we have to provide an NTP server. You can set the time manually, but I recommend using an NTP server. In this case, it’s the Active Directory domain controller in my lab. Please note that this server has to be reachable by the VSA nodes! In Part I, we deployed the VSA nodes with two NICs, and they can reach the NTP server through eth0.
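If you want to check that the NTP server actually answers before running the wizard, a minimal SNTP query (the client side of RFC 4330) is enough. The sketch below assumes you run it from a machine in the same network as the VSA nodes’ eth0, so it tests reachability from there, not from the VSA node itself. It builds a 48-byte client request, sends it to UDP port 123 and decodes the server’s transmit timestamp.

```python
import socket
import struct

# Seconds between the NTP epoch (1900-01-01) and the Unix epoch (1970-01-01).
NTP_UNIX_DELTA = 2_208_988_800


def build_sntp_request() -> bytes:
    """Return a 48-byte SNTP client request: LI=0, VN=3, Mode=3 (client)."""
    first_byte = (0 << 6) | (3 << 3) | 3  # = 0x1B
    return bytes([first_byte]) + b"\x00" * 47


def parse_transmit_time(packet: bytes) -> float:
    """Decode the transmit timestamp (bytes 40-47) of an NTP reply as Unix time."""
    if len(packet) < 48:
        raise ValueError("NTP reply is shorter than 48 bytes")
    seconds, fraction = struct.unpack("!II", packet[40:48])
    return seconds - NTP_UNIX_DELTA + fraction / 2**32


def query_ntp(server: str, timeout: float = 2.0) -> float:
    """Send one SNTP request to server:123 and return the server time (Unix seconds).

    Raises OSError (including socket.timeout) if the server does not answer.
    """
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.settimeout(timeout)
        sock.sendto(build_sntp_request(), (server, 123))
        reply, _ = sock.recvfrom(512)
    return parse_transmit_time(reply)
```

Usage: `query_ntp("192.168.200.10")`, where the address is a hypothetical placeholder for your own NTP server. A timeout means the server is not reachable from that network segment.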

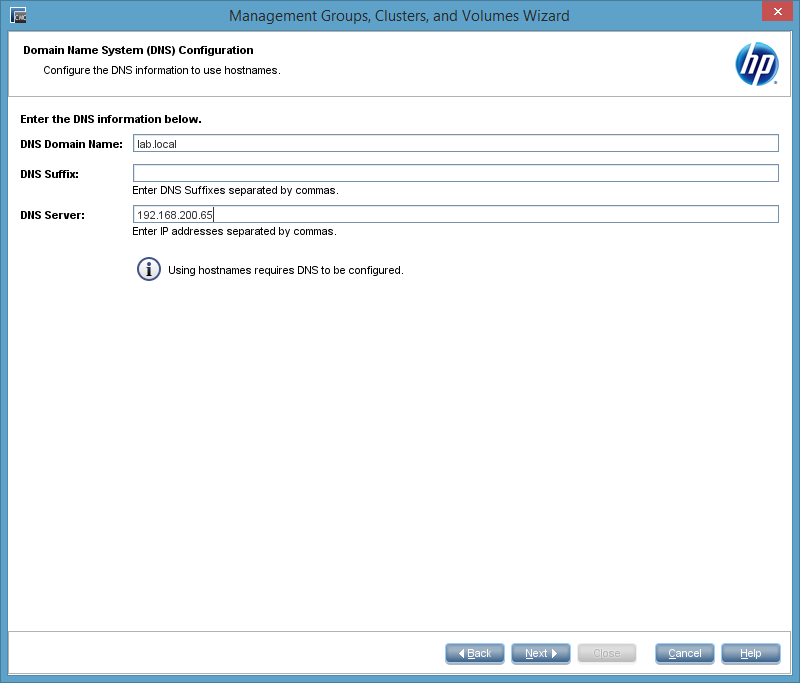

On the next page of the wizard, you have to provide DNS information: the DNS domain name, additional DNS suffixes and one or more DNS servers. The same applies to the DNS servers as to the NTP server: they have to be reachable by the VSA nodes!
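A quick pre-flight check for both the NTP and DNS servers is to see whether they sit in eth0’s subnet or whether the nodes need the default gateway to reach them. This is a minimal sketch using Python’s `ipaddress` module; the eth0 address/prefix and the server addresses are hypothetical inputs you would take from your own Part I deployment.

```python
import ipaddress


def classify_servers(eth0: str, servers: list[str]) -> dict[str, str]:
    """Label each server as on-link for eth0's subnet or as needing a gateway.

    eth0    -- address/prefix of a VSA node's eth0, e.g. "192.168.200.21/24"
    servers -- IP addresses of the NTP and DNS servers entered in the wizard
    """
    subnet = ipaddress.ip_interface(eth0).network
    return {
        server: "on-link" if ipaddress.ip_address(server) in subnet else "via gateway"
        for server in servers
    }
```

For example, `classify_servers("192.168.200.21/24", ["192.168.200.10", "10.0.0.53"])` returns `{"192.168.200.10": "on-link", "10.0.0.53": "via gateway"}`. Every server labeled “via gateway” is only reachable if the gateway configured on eth0 routes to it.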

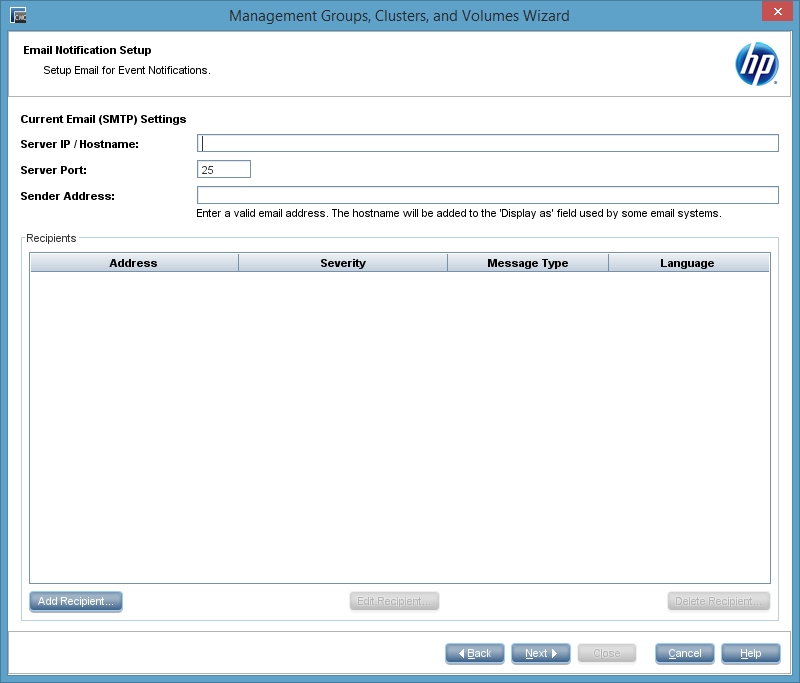

To use e-mail notifications, you have to provide an SMTP server. I don’t have one in my lab, so I left the fields empty. This results in a warning message, which can safely be ignored.

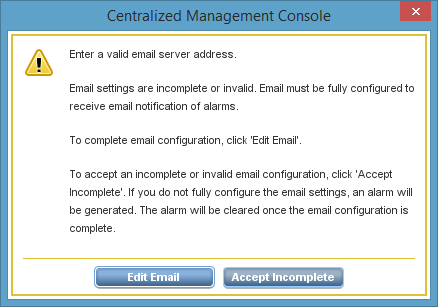

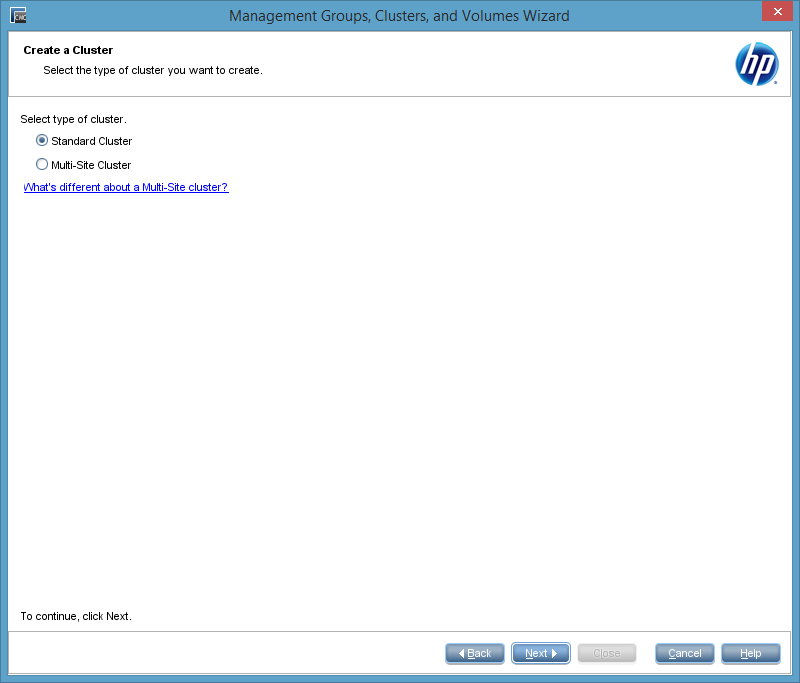

Now comes a very important question: Standard or Multi-Site cluster? A Multi-Site cluster is necessary if site fault tolerance is needed. It also ensures that traffic from hosts is only sent to the local site. A Multi-Site cluster can span multiple sites and can have cluster virtual IP addresses (cluster VIPs) in different subnets. A Multi-Site cluster is also required if you want to build a vSphere Metro Storage Cluster (vMSC) with HP StoreVirtual. I chose to create a standard cluster.

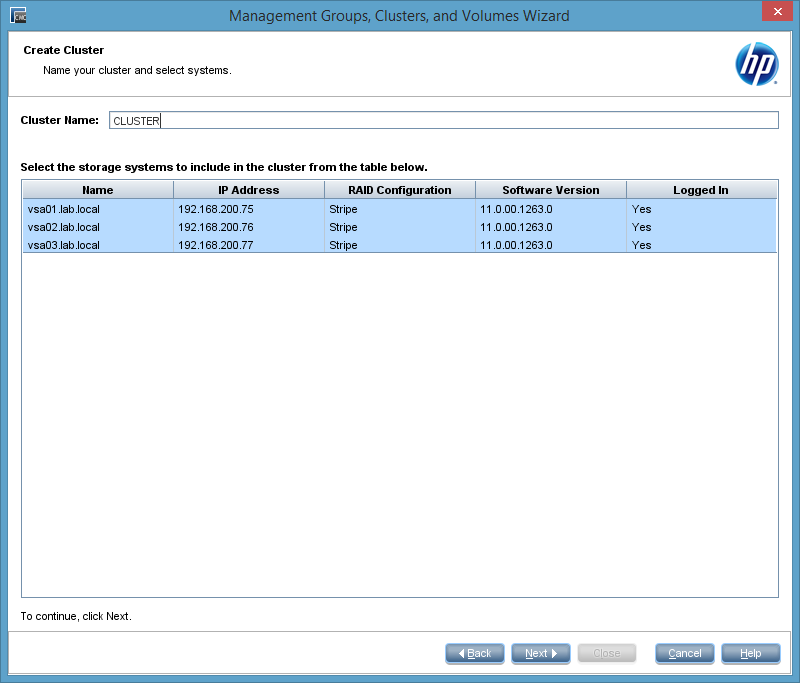

After choosing the cluster type, we have to provide a cluster name and the number of nodes that should be members of the new cluster.

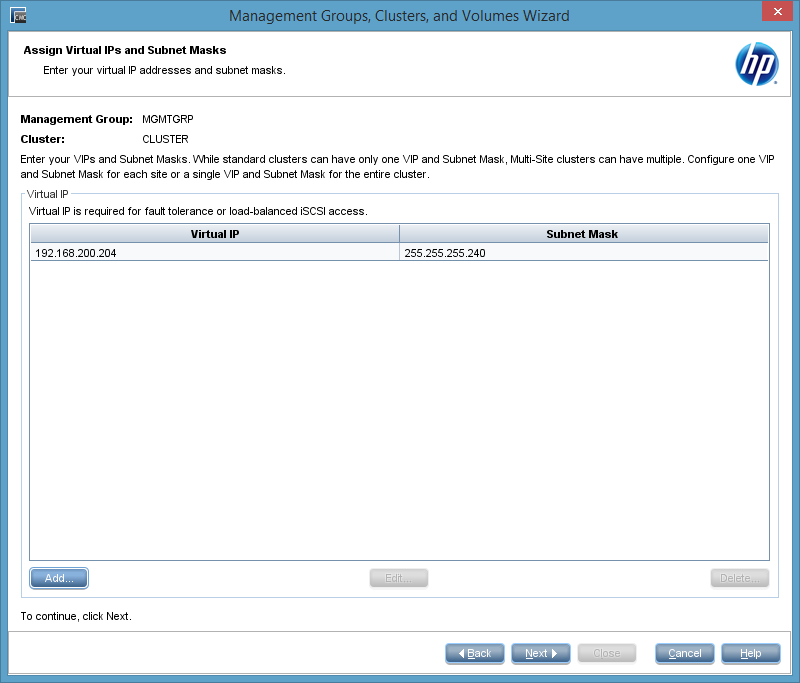

The next step is to configure the cluster virtual IP address (cluster VIP). This IP address has to be in the same subnet as the VSA nodes, and it is used to access the cluster. After the initial connection to the cluster VIP, the initiator contacts a VSA node for the data transfer.
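Before typing the VIP into the wizard, you can check it against the node addresses. The following is a minimal sketch under the assumption that you know the address/prefix of each node’s iSCSI NIC (the example values are hypothetical). It flags a VIP that lies outside a node’s subnet or that collides with a node address.

```python
import ipaddress


def check_vip(vip: str, node_interfaces: list[str]) -> list[str]:
    """Return a list of problems with the planned cluster VIP (empty list = OK).

    node_interfaces -- address/prefix of each VSA node's iSCSI NIC,
                       e.g. ["172.16.10.21/24", "172.16.10.22/24"]
    """
    vip_addr = ipaddress.ip_address(vip)
    problems = []
    for iface in map(ipaddress.ip_interface, node_interfaces):
        if vip_addr not in iface.network:
            problems.append(f"{vip} is not in {iface.network} (node {iface.ip})")
        if vip_addr == iface.ip:
            problems.append(f"{vip} is already used by VSA node {iface.ip}")
    return problems
```

An empty list means the VIP is in the same subnet as every node and does not duplicate a node address; it does not check whether some other device already uses the address.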

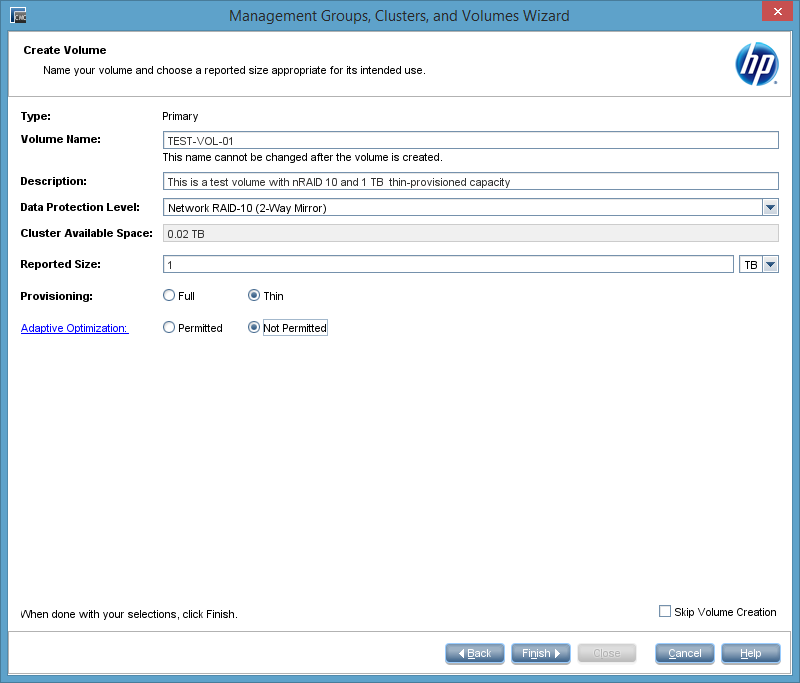

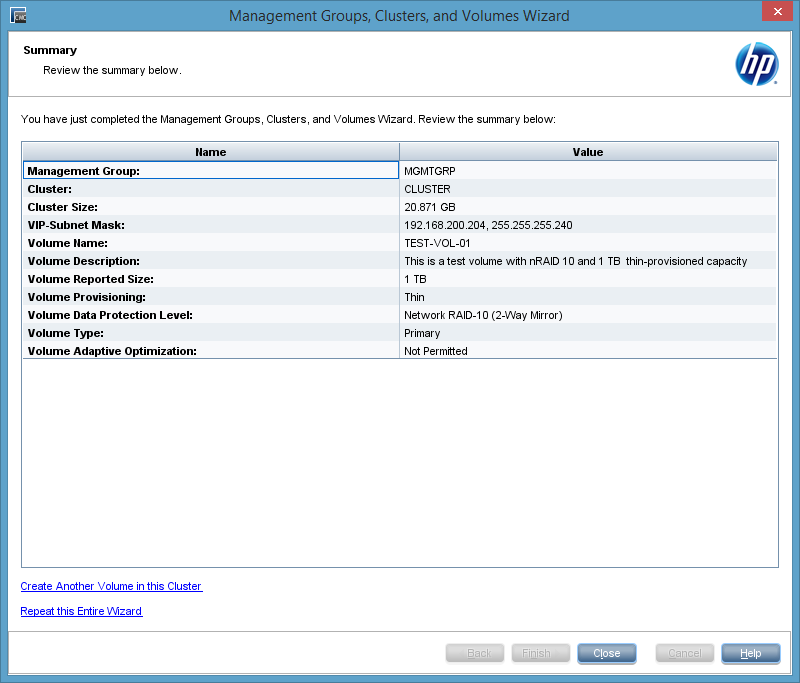

The wizard also allows us to create a volume. This step can be skipped; I created a 1 TB thin-provisioned volume.

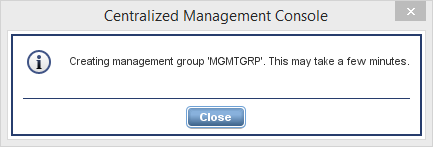

After clicking “Finish”, the management group and the cluster will be created. This step can take some time.

At the end, you will get a summary screen. You can create further volumes, or you can run the whole wizard again to create additional management groups or clusters.

Congratulations! You now have a fully functional HP StoreVirtual VSA cluster.

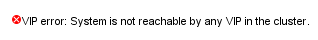

Possible cluster VIP error message

Depending on your deployment, you may get this error message in the CMC:

VIP error: System is not reachable by any VIP in the cluster

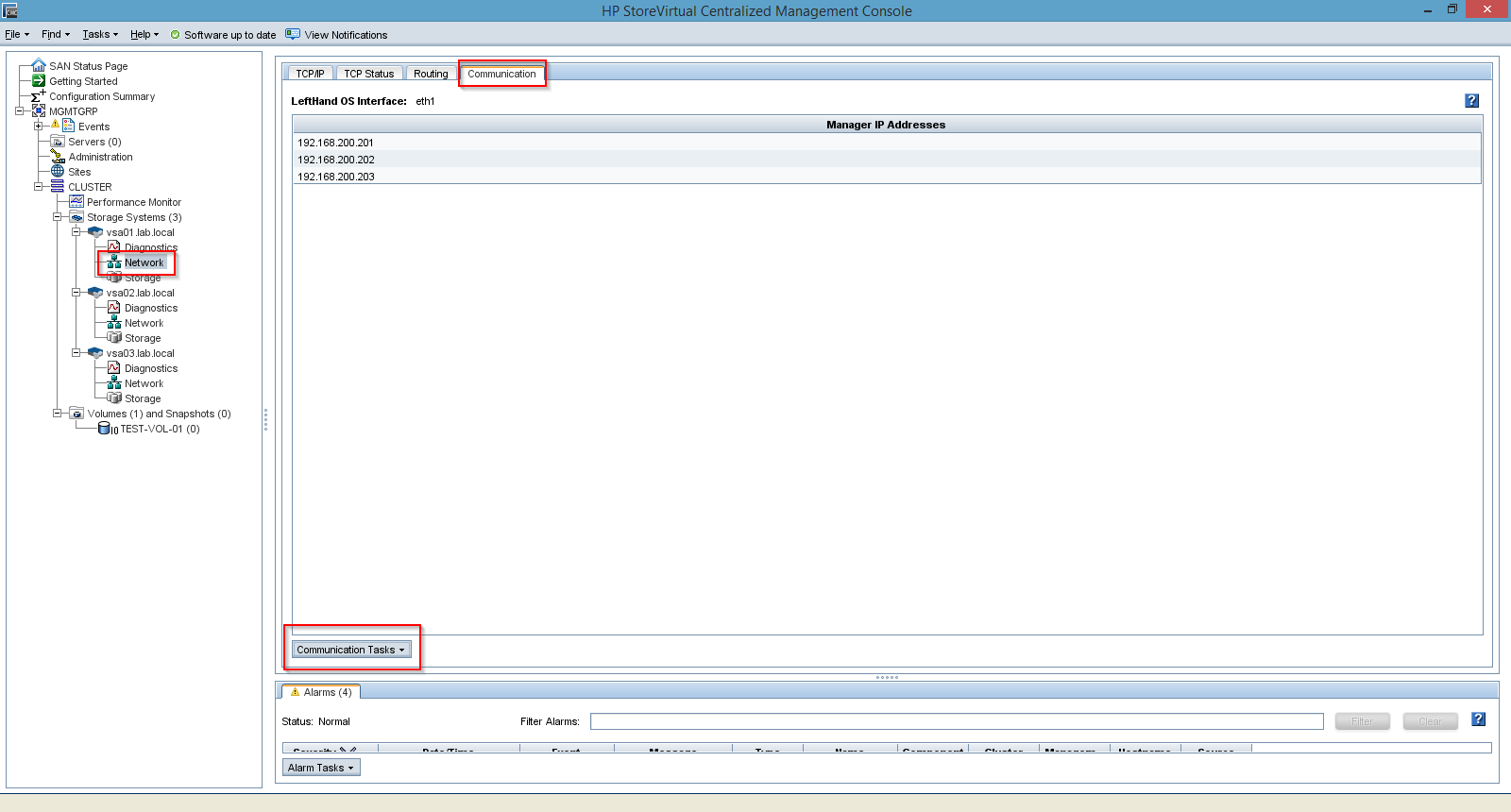

This message occurs if you have deployed your VSA nodes with two NICs and the NIC used for iSCSI isn’t selected as the preferred SAN/iQ interface. In Part I, I mentioned that I would come back to the “Select the preferred SAN/iQ interface” option later; this is that point. You can get rid of this message by selecting the correct interface as the preferred SAN/iQ interface. Select “Network” on a VSA node, click the “Communication” tab, and choose “Select LeftHandOS Interface…” from the “Communications Tasks” drop-down menu at the bottom of the page.

The message should disappear after changing this on each affected VSA node.
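If you are unsure which NIC to pick, it helps to recall that the cluster VIP has to be in the same subnet as the nodes’ iSCSI addresses. So the NIC whose subnet contains the VIP is the natural candidate. This is a minimal sketch of that rule; the interface names and addresses are hypothetical and would come from the “Network” view of each node.

```python
import ipaddress
from typing import Optional


def nic_for_vip(vip: str, nics: dict[str, str]) -> Optional[str]:
    """Return the name of the NIC whose subnet contains the cluster VIP, or None.

    nics -- interface name -> address/prefix, e.g. {"eth1": "172.16.10.21/24"}
    """
    vip_addr = ipaddress.ip_address(vip)
    for name, iface in nics.items():
        if vip_addr in ipaddress.ip_interface(iface).network:
            return name
    return None
```

With `{"eth0": "192.168.200.21/24", "eth1": "172.16.10.21/24"}` and a VIP of `172.16.10.100`, the function returns `"eth1"`. A result of `None` means no NIC shares the VIP’s subnet, which points to a VIP or addressing problem rather than a wrong interface selection.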

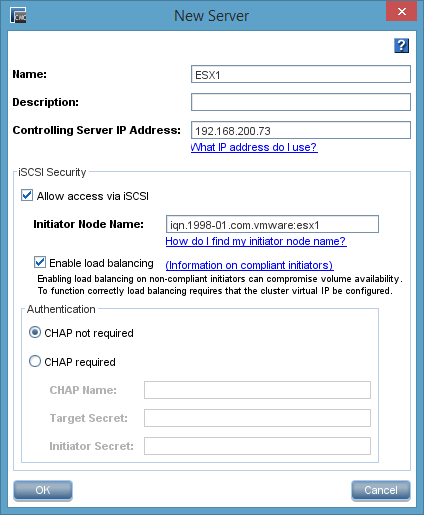

Add hosts

To present volumes to hosts, you have to add the hosts first. A host consists of a name, an IP address, an iSCSI IQN and, if needed, CHAP credentials. Multiple hosts can be grouped into server clusters; you need at least two hosts to build a server cluster. But first of all, we will add a single host:

If you want to work with application-managed snapshots, you have to provide a “Controlling Server IP Address”. When working with VMware vSphere, this is the IP address of the vCenter server.
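A typo in the IQN is a common reason why a host never sees its volumes, so it can be worth validating the host data before entering it. The sketch below is a hypothetical record mirroring the fields described above, not a CMC API. It checks the `iqn.` name format from RFC 3720 (`iqn.<yyyy-mm>.<reversed domain>[:<unique name>]`, at most 223 bytes). `eui.` and `naa.` names are deliberately not handled.

```python
import ipaddress
import re
from dataclasses import dataclass
from typing import Optional

# iqn.<yyyy-mm>.<reversed domain>[:<unique name>] (RFC 3720). iSCSI names are
# normalized to lowercase, so only lowercase letters are accepted here.
IQN_PATTERN = re.compile(
    r"^iqn\.\d{4}-(0[1-9]|1[0-2])"          # year-month of the naming authority
    r"\.[a-z0-9]([a-z0-9-]*[a-z0-9])?"       # first label of the reversed domain
    r"(\.[a-z0-9]([a-z0-9-]*[a-z0-9])?)*"    # further labels
    r"(:.+)?$"                               # optional unique name
)


def is_valid_iqn(iqn: str) -> bool:
    """Check an iqn.-format iSCSI name, including the 223-byte length limit."""
    return len(iqn.encode("utf-8")) <= 223 and IQN_PATTERN.match(iqn) is not None


@dataclass
class Host:
    """Hypothetical host record with the fields entered in the CMC."""
    name: str
    ip: str
    iqn: str
    chap_user: Optional[str] = None
    chap_secret: Optional[str] = None
    controlling_server_ip: Optional[str] = None  # vCenter IP, for app-managed snapshots

    def __post_init__(self) -> None:
        ipaddress.ip_address(self.ip)  # raises ValueError if malformed
        if not is_valid_iqn(self.iqn):
            raise ValueError(f"not a valid iqn. name: {self.iqn}")
        if (self.chap_user is None) != (self.chap_secret is None):
            raise ValueError("CHAP needs both a user name and a secret")
        if self.controlling_server_ip is not None:
            ipaddress.ip_address(self.controlling_server_ip)
```

For example, `Host("esx01", "172.16.10.51", "iqn.1998-01.com.vmware:esx01-1a2b3c4d")` is accepted, while a month of `13` in the IQN or a CHAP user without a secret raises `ValueError`. The host name, address and IQN suffix are made up for illustration.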

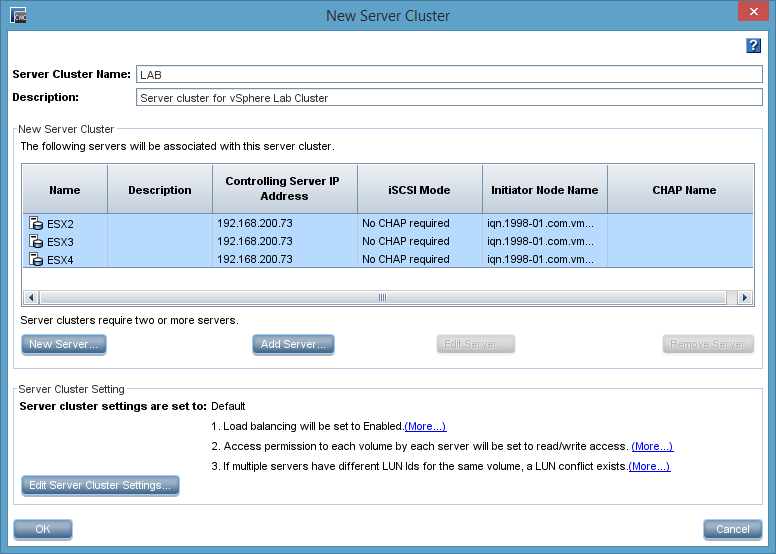

With at least two hosts, you can create a server cluster. A server cluster simplifies volume management, because you can assign and unassign volumes to a group of hosts with a single click. This ensures consistent volume presentation across all hosts in the group.
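The consistency argument can be made concrete with a toy model: when an assignment is recorded once for the whole server cluster, no member host can end up with a different access mode than the others. This is a hypothetical illustration of the behavior described above, not the CMC’s internal data model. It also encodes the two-host minimum and the read-only/read-write choice.

```python
from dataclasses import dataclass, field

ACCESS_MODES = ("read-only", "read-write")


@dataclass
class ServerCluster:
    """Toy model of a server cluster: one assignment applies to every member."""
    name: str
    hosts: list[str]
    # volume name -> {host name -> access mode}
    assignments: dict[str, dict[str, str]] = field(default_factory=dict)

    def __post_init__(self) -> None:
        if len(set(self.hosts)) < 2:
            raise ValueError("a server cluster needs at least two hosts")

    def assign(self, volume: str, access: str = "read-write") -> None:
        if access not in ACCESS_MODES:
            raise ValueError(f"access must be one of {ACCESS_MODES}")
        # A single call records the same access mode for every member host.
        self.assignments[volume] = {host: access for host in self.hosts}

    def unassign(self, volume: str) -> None:
        # Removing the entry unassigns the volume from all members at once.
        self.assignments.pop(volume, None)
```

Per-host assignments would need one change per host, and that is where inconsistent presentations usually creep in.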

Presenting a volume

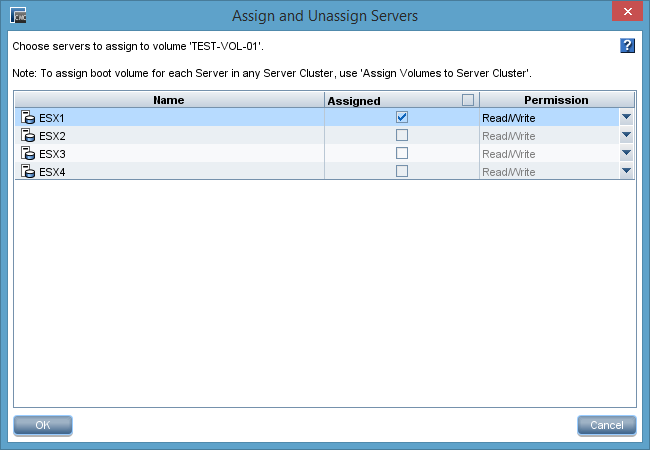

During the initial configuration, we created a 1 TB thin-provisioned Network RAID 10 volume. To assign this volume to a host, right-click the volume in the CMC and click “Assign and Unassign Servers…”. A window will pop up in which you can check or uncheck the servers to which the volume should be assigned. A volume can be presented read-only or read-write.

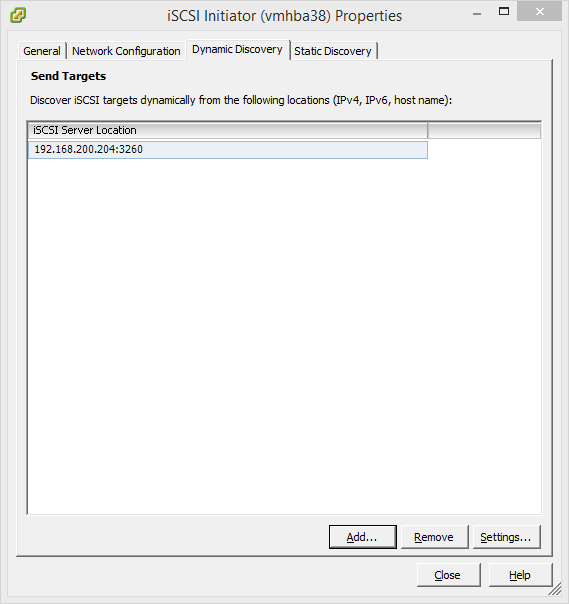

We are nearly at the end. We only have to add the cluster VIP to the iSCSI initiator and create a datastore out of the presented volume.
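On an ESXi host, adding the cluster VIP as a dynamic discovery (send target) address and rescanning the adapter can also be done with `esxcli` instead of the vSphere Client. The sketch below only assembles and prints the two commands so you can paste them into the ESXi Shell. The adapter name `vmhba33` and the VIP are hypothetical placeholders; check your software iSCSI adapter name with `esxcli iscsi adapter list`. Port 3260 is the standard iSCSI port.

```python
import shlex
import subprocess


def iscsi_setup_commands(adapter: str, vip: str, port: int = 3260) -> list[list[str]]:
    """Build esxcli commands that add the cluster VIP as a send target and rescan."""
    return [
        ["esxcli", "iscsi", "adapter", "discovery", "sendtarget", "add",
         f"--adapter={adapter}", f"--address={vip}:{port}"],
        ["esxcli", "storage", "core", "adapter", "rescan", f"--adapter={adapter}"],
    ]


def run(commands: list[list[str]], dry_run: bool = True) -> None:
    """Print each command; execute it only when dry_run is False."""
    for cmd in commands:
        print(shlex.join(cmd))
        if not dry_run:
            subprocess.run(cmd, check=True)
```

`run(iscsi_setup_commands("vmhba33", "172.16.10.100"))` prints `esxcli iscsi adapter discovery sendtarget add --adapter=vmhba33 --address=172.16.10.100:3260` followed by `esxcli storage core adapter rescan --adapter=vmhba33`. Keeping `dry_run=True` as the default avoids touching a host by accident.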

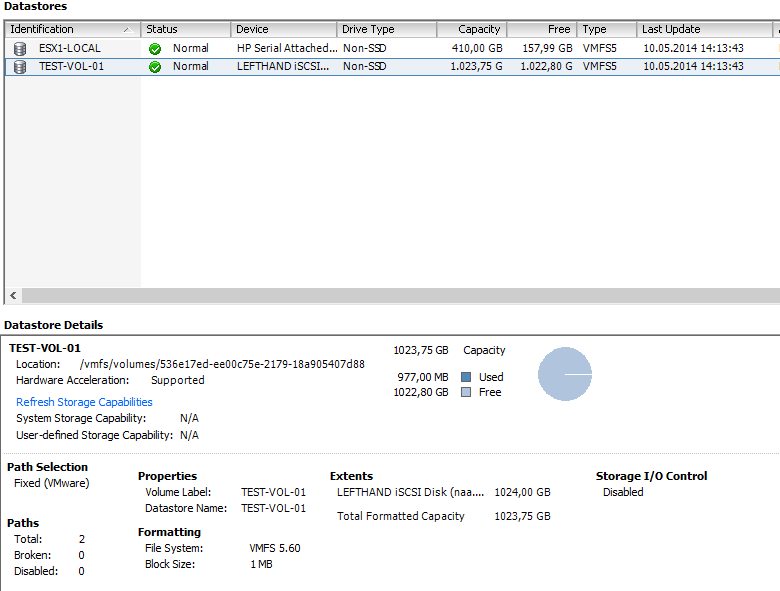

After a rescan, a new datastore can be created from the presented volume. Have I mentioned that each VSA node has only 10 GB of data storage? Thin provisioning can be treacherous… ;)
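The arithmetic behind that warning is short. This sketch assumes three nodes with 10 GB each, that Network RAID 10 keeps two copies of every block, and that 1 TB is taken as 1024 GB. It also ignores metadata overhead, so the real usable capacity is somewhat lower.

```python
def usable_capacity_gb(nodes: int, per_node_gb: float, copies: int) -> float:
    """Raw cluster capacity divided by the number of data copies (overhead ignored)."""
    return nodes * per_node_gb / copies


def overcommit_ratio(provisioned_gb: float, usable_gb: float) -> float:
    """How many times the provisioned size exceeds what can actually be stored."""
    return provisioned_gb / usable_gb


usable = usable_capacity_gb(nodes=3, per_node_gb=10, copies=2)  # Network RAID 10: 2 copies
ratio = overcommit_ratio(provisioned_gb=1024, usable_gb=usable)  # 1 TB taken as 1024 GB
print(f"usable: {usable:.0f} GB, over-commit: {ratio:.1f}x")
```

This prints `usable: 15 GB, over-commit: 68.3x`. In other words, the datastore will report roughly 1 TB free, but the cluster runs out of space after about 15 GB of written data.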

Final words

The deployment and configuration are really easy, but this short series only scratched the surface. You can now add more volumes and play with SmartClones and remote snapshots. Have fun!